PRINCIPLES OF ASR TECHNOLOGY………………..……………….………..3

PERFORMANCE AND DESIGN ISSUES IN SPEECH APPLICATIONS……...7

CURRENT TRENDS IN VOICE-INTERACTIVE CALL………….…….………8

FUTURE TRENDS IN VOICE-INTERACTIVE CALL…….…..…………....…13

DEFINING AND ACQUIRING LITERACY IN THE AGE OF INFORMATION…………………………………….………………………..…..14

CONTENT-BASED INSTRUCTION AND LITERACY DEVELOPMENT…..15

THEORY INTO PRACTICE…………….……………………………………….17

CONCLUSION………………………………………………………...…………17

REFERENCES……………………………………………………………………18

INTRODUCTION

During the past two decades, the exercise of spoken language skills has received increasing attention among educators. Foreign language curricula focus on productive skills with special emphasis on communicative competence. Students' ability to engage in meaningful conversational interaction in the target language is considered an important, if not the most important, goal of second language education. This shift of emphasis has generated a growing need for instructional materials that provide an opportunity for controlled interactive speaking practice outside the classroom.

With recent advances in multimedia technology, computer-aided language learning (CALL) has emerged as a tempting alternative to traditional modes of supplementing or replacing direct student-teacher interaction, such as the language laboratory or audio-tape-based self-study. The integration of sound, voice interaction, text, video, and animation has made it possible to create self-paced interactive learning environments that promise to enhance the classroom model of language learning significantly. A growing number of textbook publishers now offer educational software of some sort, and educators can choose among a large variety of different products. Yet, the practical impact of CALL in the field of foreign language education has been rather modest. Many educators are reluctant to embrace a technology that still seeks acceptance by the language teaching community as a whole (Kenning & Kenning, 1990).

A number of reasons have been cited for the limited practical impact of computer-based language instruction. Among them are the lack of a unified theoretical framework for designing and evaluating CALL systems (Chapelle, 1997; Hubbard, 1988; Ng & Olivier, 1987); the absence of conclusive empirical evidence for the pedagogical benefits of computers in language learning (Chapelle, 1997; Dunkel, 1991; Salaberry, 1996); and finally, the current limitations of the technology itself (Holland, 1995; Warschauer, 1996). The rapid technological advances of the 1980s have raised both the expectations and the demands placed on the computer as a potential learning tool. Educators and second language acquisition (SLA) researchers alike are now demanding intelligent, user-adaptive CALL systems that offer not only sophisticated diagnostic tools, but also effective feedback mechanisms capable of focusing the learner on areas that need remedial practice. As Warschauer puts it, a computerized language teacher should be able to understand a user's spoken input and evaluate it not just for correctness but also for appropriateness. It should be able to diagnose a student's problems with pronunciation, syntax, or usage, and then intelligently decide among a range of options (e.g., repeating, paraphrasing, slowing down, correcting, or directing the student to background explanations). (Warschauer, 1996, p. 6)

Salaberry (1996) demands nothing short of a system capable of simulating the complex socio-communicative competence of a live tutor--in other words, the linguistic intelligence of a human--only to conclude that the attempt to create an "intelligent language tutoring system is a fallacy" (p. 11). Because speech technology isn't perfect, it is of no use at all. If it "cannot account for the full complexity of human language," why even bother modeling more constrained aspects of language use (Higgins, 1988, p. vii)? This sort of all-or-nothing reasoning seems symptomatic of much of the latest pedagogical literature on CALL. The quest for a theoretical grounding of CALL system design and evaluation (Chapelle, 1997) tends to lead to exaggerated expectations as to what the technology ought to accomplish. When combined with little or no knowledge of the underlying technology, the inevitable result is disappointment.

PRINCIPLES OF ASR TECHNOLOGYConsider the following four scenarios:

1. A court reporter listens to the opening arguments of the defense and types the words into a steno-machine attached to a word-processor.

2. A medical doctor activates a dictation device and speaks his or her patient's name, date of birth, symptoms, and diagnosis into the computer. He or she then pushes "end input" and "print" to produce a written record of the patient's diagnosis.

3. A mother tells her three-year old, "Hey Jimmy, get me my slippers, will you?" The toddler smiles, goes to the bedroom, and returns with papa's hiking boots.

4. A first-grader reads aloud a sentence displayed by an automated Reading Tutor. When he or she stumbles over a difficult word, the system highlights the word, and a voice reads the word aloud. The student repeats the sentence--this time correctly--and the system responds by displaying the next sentence.

At some level, all four scenarios involve speech recognition. An incoming speech signal elicits a response from a "listener." In the first two instances, the response consists of a written transcript of the spoken input, whereas in the latter two cases, an action is performed in response to a spoken command. In all four cases, the "success" of the voice interaction is relative to a given task as embodied in a set of expectations that accompany the input. The interaction succeeds when the response--by a machine or human "listener"--matches these expectations.

Recognizing and understanding human speech requires a considerable amount of linguistic knowledge: a command of the phonological, lexical, semantic, grammatical, and pragmatic conventions that constitute a language. The listener's command of the language must be "up" to the recognition task or else the interaction fails. Jimmy returns with the wrong items, because he cannot yet verbally discriminate between different kinds of shoes. Likewise, the reading tutor would miserably fail in performing the court-reporter's job or transcribing medical patient information, just as the medical dictation device would be a poor choice for diagnosing a student's reading errors. On the other hand, the human court reporter--assuming he or she is an adult native speaker--would have no problem performing any of the tasks mentioned under (1) through (4). The linguistic competence of an adult native speaker covers a broad range of recognition tasks and communicative activities. Computers, on the other hand, perform best when designed to operate in clearly circumscribed linguistic sub-domains.

Humans and machines process speech in fundamentally different ways (Bernstein & Franco, 1996). Complex cognitive processes account for the human ability to associate acoustic signals with meanings and intentions. For a computer, on the other hand, speech is essentially a series of digital values. However, despite these differences, the core problem of speech recognition is the same for both humans and machines: namely, of finding the best match between a given speech sound and its corresponding word string. Automatic speech recognition technology attempts to simulate and optimize this process computationally.

Since the early 1970s, a number of different approaches to ASR have been proposed and implemented, including Dynamic Time Warping, template matching, knowledge-based expert systems, neural nets, and Hidden Markov Modeling (HMM) (Levinson & Liberman, 1981; Weinstein, McCandless, Mondshein, & Zue, 1975; for a review, see Bernstein & Franco, 1996). HMM-based modeling applies sophisticated statistical and probabilistic computations to the problem of pattern matching at the sub-word level. The generalized HMM-based approach to speech recognition has proven an effective, if not the most effective, method for creating high-performance speaker-independent recognition engines that can cope with large vocabularies; the vast majority of today's commercial systems deploy this technique. Therefore, we focus our technical discussion on an explanation of this technique.

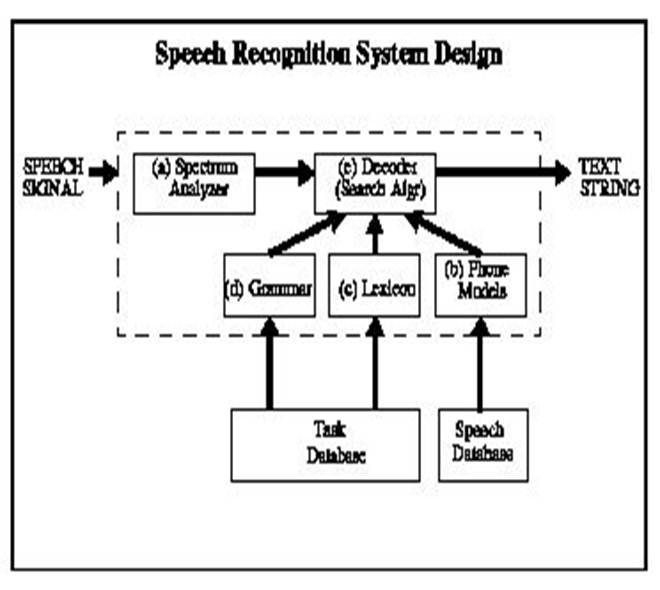

An HMM-based speech recognizer consists of five basic components: (a) an acoustic signal analyzer which computes a spectral representation of the incoming speech; (b) a set of phone models (HMMs) trained on large amounts of actual speech data; (c) a lexicon for converting sub-word phone sequences into words; (d) a statistical language model or grammar network that defines the recognition task in terms of legitimate word combinations at the sentence level; (e) a decoder, which is a search algorithm for computing the best match between a spoken utterance and its corresponding word string. Figure 1 shows a schematic representation of the components of a speech recognizer and their functional interaction.

Figure 1. Components of a speech recognition device

A. Signal AnalysisThe first step in automatic speech recognition consists of analyzing the incoming speech signal. When a person speaks into an ASR device--usually through a high quality noise-canceling microphone--the computer samples the analog input into a series of 16- or 8-bit values at a particular sampling frequency (ranging from 8 to 22KHz). These values are grouped together in predetermined overlapping temporal intervals called "frames." These numbers provide a precise description of the speech signal's amplitude. In a second step, a number of acoustically relevant parameters such as energy, spectral features, and pitch information, are extracted from the speech signal (for a visual representation of some of these parameters, see Figure 2 on page 53). During training, this information is used to model that particular portion of the speech signal. During recognition, this information is matched against the pre-existing model of the signal.

B. Phone ModelsTraining a machine to recognize spoken language amounts to modeling the basic sounds of speech (phones). Automatic speech recognition strings together these models to form words. Recognizing an incoming speech signal involves matching the observed acoustic sequence with a set of HMM models. An HMM can model either phones or other sub-word units or it can model words or even whole sentences. Phones are either modeled as individual sounds--so-called monophones--or as phone combinations that model several phones and the transitions between them (biphones or triphones). After comparing the incoming acoustic signal with the HMMs representing the sounds of language, the system computes a hypothesis based on the sequence of models that most closely resembles the incoming signal. The HMM model for each linguistic unit (phone or word) contains a probabilistic representation of all the possible pronunciations for that unit--just as the model of the handwritten cursive b would have many different representations. Building HMMs--a process called training--requires a large amount of speech data of the type the system is expected to recognize. Large-vocabulary speaker-independent continuous dictation systems are typically trained on tens of thousands of read utterances by a cross-section of the population, including members of different dialect regions and age-groups. As a general rule, an automatic speech recognizer cannot correctly process speech that differs in kind from the speech it has been trained on. This is why most commercial dictation systems, when trained on standard American English, perform poorly when encountering accented speech, whether by non-native speakers or by speakers of different dialects. We will return to this point in our discussion of voice-interactive CALL applications.

C. LexiconThe lexicon, or dictionary, contains the phonetic spelling for all the words that are expected to be observed by the recognizer. It serves as a reference for converting the phone sequence determined by the search algorithm into a word. It must be carefully designed to cover the entire lexical domain in which the system is expected to perform. If the recognizer encounters a word it does not "know" (i.e., a word not defined in the lexicon), it will either choose the closest match or return an out-of-vocabulary recognition error. Whether a recognition error is registered as a misrecognition or an out-of-vocabulary error depends in part on the vocabulary size. If, for example, the vocabulary is too small for an unrestricted dictation task--let's say less than 3K--the out-of-vocabulary errors are likely to be very high. If the vocabulary is too large, the chance of misrecognition errors increases because with more similar-sounding words, the confusability increases. The vocabulary size in most commercial dictation systems tends to vary between 5K and 60K.

D. The Language ModelThe language model predicts the most likely continuation of an utterance on the basis of statistical information about the frequency in which word sequences occur on average in the language to be recognized. For example, the word sequence A bare attacked him will have a very low probability in any language model based on standard English usage, whereas the sequence A bear attacked him will have a higher probability of occurring. Thus the language model helps constrain the recognition hypothesis produced on the basis of the acoustic decoding just as the context helps decipher an unintelligible word in a handwritten note. Like the HMMs, an efficient language model must be trained on large amounts of data, in this case texts collected from the target domain.

In ASR applications with constrained lexical domain and/or simple task definition, the language model consists of a grammatical network that defines the possible word sequences to be accepted by the system without providing any statistical information. This type of design is suitable for CALL applications in which the possible word combinations and phrases are known in advance and can be easily anticipated (e.g., based on user data collected with a system pre-prototype). Because of the a priori constraining function of a grammar network, applications with clearly defined task grammars tend to perform at much higher accuracy rates than the quality of the acoustic recognition would suggest.

E. DecoderSimply put, the decoder is an algorithm that tries to find the utterance that maximizes the probability that a given sequence of speech sounds corresponds to that utterance. This is a search problem, and especially in large vocabulary systems careful consideration must be given to questions of efficiency and optimization, for example to whether the decoder should pursue only the most likely hypothesis or a number of them in parallel (Young, 1996). An exhaustive search of all possible completions of an utterance might ultimately be more accurate but of questionable value if one has to wait two days to get a result. Trade-offs are therefore necessary to maximize the search results while at the same time minimizing the amount of CPU and recognition time.

PERFORMANCE AND DESIGN ISSUES IN SPEECH APPLICATIONSFor educators and developers interested in deploying ASR in CALL applications, perhaps the most important consideration is recognition performance: How good is the technology? Is it ready to be deployed in language learning? These questions cannot be answered except with reference to particular applications of the technology, and therefore touch on a key issue in ASR development: the issue of human-machine interface design.

As we recall, speech recognition performance is always domain specific--a machine can only do what it is programmed to do, and a recognizer with models trained to recognize business news dictation under laboratory conditions will be unable to handle spontaneous conversational speech transmitted over noisy telephone channels. The question that needs to be answered is therefore not simply "How good is ASR technology?" but rather, "What do we want to use it for?" and "How do we get it to perform the task?"

In the following section, we will address the issue of system performance as it relates to a number of successful commercial speech applications. By emphasizing the distinction between recognizer performance on the one hand--understood in terms of "raw" recognition accuracy--and system performance on the other; we suggest how the latter can be optimized within an overall design that takes into account not only the factors that affect recognizer performance as such, but also, and perhaps even more importantly, considerations of human-machine interface design.

Historically, basic speech recognition research has focused almost exclusively on optimizing large vocabulary speaker-independent recognition of continuous dictation. A major impetus for this research has come from US government sponsored competitions held annually by the Defense Advanced Research Projects Agency (DARPA). The main emphasis of these competitions has been on improving the "raw" recognition accuracy--calculated in terms of average omissions, insertions, and substitutions--of large-vocabulary continuous speech recognizers (LVCSRs) in the task of recognizing read sentence material from a number of standard sources (e.g., The Wall Street Journal or The New York Times). The best laboratory systems that participated in the WSJ large-vocabulary continuous dictation task have achieved word error rates as low as 5%, that is, on average, one recognition error in every twenty words (Pallet, 1994).

CURRENT TRENDS IN VOICE-INTERACTIVE CALLIn recent years, an increasing number of speech laboratories have begun deploying speech technology in CALL applications. Results include voice-interactive prototype systems for teaching pronunciation, reading, and limited conversational skills in semi-constrained contexts. Our review of these applications is far from exhaustive. It covers a select number of mostly experimental systems that explore paths we found promising and worth pursuing. We will discuss the range of voice-interactions these systems offer for practicing certain language skills, explain their technical implementation, and comment on the pedagogical value of these implementations. Apart from giving a brief system overview, we report experimental results if available and provide an assessment of how far away the technology is from being deployed in the commercial and educational environments.

Pronunciation TrainingA useful and remarkably successful application of speech recognition and processing technology has been demonstrated by a number of research and commercial laboratories in the area of pronunciation training. Voice-interactive pronunciation tutors prompt students to repeat spoken words and phrases or to read aloud sentences in the target language for the purpose of practicing both the sounds and the intonation of the language. The key to teaching pronunciation successfully is corrective feedback, more specifically, a type of feedback that does not rely on the student's own perception. A number of experimental systems have implemented automatic pronunciation scoring as a means to evaluate spoken learner productions in terms of fluency, segmental quality (phonemes) and supra-segmental features (intonation). The automatically generated proficiency score can then be used as a basis for providing other modes of corrective feedback. We discuss segmental and supra-segmental feedback in more detail below.

Segmental Feedback. Technically, designing a voice-interactive pronunciation tutor goes beyond the state of the art required by commercial dictation systems. While the grammar and vocabulary of a pronunciation tutor is comparatively simple, the underlying speech processing technology tends to be complex since it must be customized to recognize and evaluate the disfluent speech of language learners. A conventional speech recognizer is designed to generate the most charitable reading of a speaker's utterance. Acoustic models are generalized so as to accept and recognize correctly a wide range of different accents and pronunciations. A pronunciation tutor, by contrast, must be trained to both recognize and correct subtle deviations from standard native pronunciations.

A number of techniques have been suggested for automatic recognition and scoring of non-native speech (Bernstein, 1997; Franco, Neumeyer, Kim, & Ronen, 1997; Kim, Franco, & Neumeyer, 1997; Witt & Young, 1997). In general terms, the procedure consists of building native pronunciation models and then measuring the non-native responses against the native models. This requires models trained on both native and non-native speech data in the target language, and supplemented by a set of algorithms for measuring acoustic variables that have proven useful in distinguishing native from non-native speech. These variables include response latency, segment duration, inter-word pauses (in phrases), spectral likelihood, and fundamental frequency (F0). Machine scores are calculated from statistics derived from comparing non-native values for these variables to the native models.

In a final step, machine generated pronunciation scores are validated by correlating these scores with the judgment of human expert listeners. As one would expect, the accuracy of scores increases with the duration of the utterance to be evaluated. Stanford Research Institute (SRI) has demonstrated a 0.44 correlation between machine scores and human scores at the phone level. At the sentence level, the machine-human correlation was 0.58, and at the speaker level it was 0.72 for a total of 50 utterances per speaker (Franco et al., 1997; Kim et al., 1997). These results compare with 0.55, 0.65, and 0.80 for phone, utterance, and speaker level correlation between human graders. A study conducted at Entropic shows that based on about 20 to 30 utterances per speaker and on a linear combination of the above techniques, it is possible to obtain machine-human grader correlation levels as high as 0.85 (Bernstein, 1997).

Others have used expert knowledge about systematic pronunciation errors made by L2 adult learners in order to diagnose and correct such errors. One such system is the European Community project SPELL for automated assessment and improvement of foreign language pronunciation (Hiller, Rooney, Vaughan, Eckert, Laver, & Jack, 1994). This system uses advanced speech processing and recognition technologies to assess pronunciation errors by L2 learners of English (French or Italian speakers) and provide immediate corrective feedback. One technique for detecting consonant errors induced by inter-language transfer was to include students' L1 pronunciations into the grammar network. In addition to the English /th/ sound, for example, the grammar network also includes /t/ or /s/, that is, errors typical of non-native Italian speakers of English. This system, although quite simple in the use of ASR technology, can be very effective in diagnosing and correcting known problems of L1 interference. However, it is less effective in detecting rare and more idiosyncratic pronunciation errors. Furthermore, it assumes that the phonetic system of the target language (e.g., English) can be accurately mapped to the learners' native language (e.g., Italian). While this assumption may work well for an Italian learner of English, it certainly does not for a Chinese learner; that is, there are sounds in Chinese that do not resemble any sounds in English.

A system for teaching the pronunciation of Japanese long vowels, the mora nasal, and mora obstruents was recently built at the University of Tokyo. This system enables students to practice phonemic differences in Japanese that are known to present special challenges to L2 learners. It prompts students to pronounce minimal pairs (e.g., long and short vowels) and returns immediate feedback on segment duration. Based on the limited data, the system seems quite effective at this particular task. Learners quickly mastered the relevant duration cues, and the time spent on learning these pronunciation skills was well within the constraints of Japanese L2 curricula (Kawai & Hirose, 1997). However, the study provides no data on long-term effects of using the system.

Supra-segmental Feedback. Correct usage of supra-segmental features such as intonation and stress has been shown to improve the syntactic and semantic intelligibility of spoken language (Crystal, 1981). In spoken conversation, intonation and stress information not only helps listeners to locate phrase boundaries and word emphasis, but also to identify the pragmatic thrust of the utterance (e.g., interrogative vs. declarative). One of the main acoustical correlates of stress and intonation is fundamental frequency (F0); other acoustical characteristics include loudness, duration, and tempo. Most commercial signal processing software have tools for tracking and visually displaying F0 contours (see Figure 2). Such displays can and have been used to provide valuable pronunciation feedback to students. Experiments have shown that a visual F0 display of supra-segmental features combined with audio feedback is more effective than audio feedback alone (de Bot, 1983; James, 1976), especially if the student's F0 contour is displayed along with a native model. The feasibility of this type of visual feedback has been demonstrated by a number of simple prototypes (Abberton & Fourcin, 1975; Anderson-Hsieh, 1994; Hiller et al., 1994; Spaai & Hermes, 1993; Stibbard, 1996). We believe that this technology has a good potential for being incorporated into commercial CALL systems.

Other types of visual pronunciation feedback include the graphical display of a native speaker's face, the vocal tract, spectrum information, and speech waveforms (see Figure 2). Experiments have shown that a visual display of the talker improves not only word identification accuracy (Bernstein & Christian, 1996), but also speech rhythm and timing (Markham & Nagano-Madesen, 1997). A large number of commercial pronunciation tutors on the market today offer this kind of feedback. Yet others have experimented with using a real-time spectrogram or waveform display of speech to provide pronunciation feedback. Molholt (1990) and Manuel (1990) report anecdotal success in using such displays along with guidance on how to interpret the displays to improve the pronunciation of suprasegmental features in L2 learners of English. However, the authors do not provide experimental evidence for the effectiveness of this type of visual feedback. Our own experience with real-time spectrum and waveform displays suggests their potential use as pronunciation feedback provided they are presented along with other types of feedback, as well as with instructions on how to interpret the displays.

Teaching Linguistic Structures and Limited ConversationApart from supporting systems for teaching basic pronunciation and literacy skills, ASR technology is being deployed in automated language tutors that offer practice in a variety of higher-level linguistic skills ranging from highly constrained grammar and vocabulary drills to limited conversational skills in simulated real-life situations. Prior to implementing any such system, a choice needs to be made between two fundamentally different system design types: closed response vs. open response design. In both designs, students are prompted for speech input by a combination of written, spoken, or graphical stimuli. However, the designs differ significantly with reference to the type of verbal computer-student interaction they support. In closed response systems, students must choose one response from a limited number of possible responses presented on the screen. Students know exactly what they are allowed to say in response to any given prompt. By contrast, in systems with open response design, the network remains hidden and the student is challenged to generate the appropriate response without any cues from the system.

Closed Response Designs. One of the first implementations of a closed response design was the Voice Interactive Language Instruction System (VILIS) developed at SRI (Bernstein & Rtischev, 1991). This system elicits spoken student responses by presenting queries about graphical displays of maps and charts. Students infer the right answers to a set of multiple-choice questions and produce spoken responses.

A more recent prototype currently under development in SRI is the Voice Interactive Language Training System (VILTS), a system designed to foster speaking and listening skills for beginning through advanced L2 learners of French (Egan, 1996; Neumeyer et al., 1996; Rypa, 1996). The system incorporates authentic, unscripted conversational materials collected from French speakers into an engaging, flexible, and user-centered lesson architecture. The system deploys speech recognition to guide students through the lessons and automatic pronunciation scoring to provide feedback on the fluency of student responses. As far as we know, only the pronunciation scoring aspect of the system has been validated in experimental trials (Neumeyer et al., 1996).

In pedagogically more sophisticated systems, the query-response mode is highly contextualized and presented as part of a simulated conversation with a virtual interlocutor. To stimulate student interest, closed response queries are often presented in the form of games or goal-driven tasks. One commercial system that exploits the full potential of this design is TraciTalk (Courseware Publishing International, Inc., Cupertino, CA), a voice-driven multimedia CALL system aimed at more advanced ESL learners. In a series of loosely connected scenarios, the system engages students in solving a mystery. Prior to each scenario, students are given a task (e.g., eliciting a certain type of information), and they accomplish this task by verbally interacting with characters on the screen. Each voice interaction offers several possible responses, and each spoken response moves the conversation in a slightly different direction. There are many paths through each scenario, and not every path yields the desired information. This motivates students to return to the beginning of the scene and try out a different interrogation strategy. Moreover, TraciTalk features an agent that students can ask for assistance and accepts spoken commands for navigating the system. Apart from being more fun and interesting, games and task-oriented programs implicitly provide positive feedback by giving students the feeling of having solved a problem solely by communicating in the target language.

The speech recognition technology underlying closed response query implementations is very simple, even in the more sophisticated systems. For any given interaction, the task perplexity is low and the vocabulary size is comparatively small. As a result, these systems tend to be very robust. Recognition accuracy rates in the low to upper 90% range can be expected depending on task definition, vocabulary size, and the degree of non-native disfluency.

FUTURE TRENDS IN VOICE-INTERACTIVE CALLIn the previous sections, we reviewed the current state of speech technology, discussed some of the factors affecting recognition performance, and introduced a number of research prototypes that illustrate the range of speech-enabled CALL applications that are currently technically and pedagogically feasible. With the exception of a few exploratory open response dialog systems, most of these systems are designed to teach and evaluate linguistic form (pronunciation, fluency, vocabulary study, or grammatical structure). This is no coincidence. Formal features can be clearly identified and integrated into a focused task design. This means that robust performance can be expected. Furthermore, mastering linguistic form remains an important component of L2 instruction, despite the emphasis on communication (Holland, 1995). Prolonged, focused practice of a large number of items is still considered an effective means of expanding and reinforcing linguistic competence (Waters, 1994). However, such practice is time consuming. CALL can automate these aspects of language training, thereby freeing up valuable class time that would otherwise be spent on drills.

While such systems are an important step in the right direction, other more complex and ambitious applications are conceivable and no doubt desirable. Imagine a student being able to access the Internet, find the language of his or her choice, and tap into a comprehensive voice-interactive multimedia language program that would provide the equivalent of an entire first year of college instruction. The computer would evaluate the student's proficiency level and design a course of study tailored to his or her needs. Or think of using the same Internet resources and a set of high-level authoring tools to put together a series of virtual encounters surrounding the task of finding an apartment in Berlin. As a minimum, one would hope that natural speech input capacity becomes a routine feature of any CALL application.

To many educators, these may still seem like distant goals, and yet we believe that they are not beyond reach. In what follows, we identify four of the most persistent issues in building speech-enabled language learning applications and suggest how they might be resolved to enable a more widespread commercial implementation of speech technology in CALL.

1. More research is necessary on modeling and predicting multi-turn dialogs.An intelligent open response language tutor must not only correctly recognize a given speech input, but in addition understand what has been said and evaluate the meaning of the utterance for pragmatic appropriateness. Automatic speech understanding requires Natural Language Processing (NLP) capabilities, a technology for extracting grammatical, semantic, and pragmatic information from written or spoken discourse. NLP has been successfully deployed in expert systems and information retrieval. One of the first voice-interactive dialog systems using NLP was the DARPA-sponsored Air Travel Information System (Pallett, 1995), which enables the user to obtain flight information and make ticket reservations over the telephone. Similar commercial systems have been implemented for automatic retrieval of weather and restaurant information, virtual environments, and telephone auto-attendants. Many of the lessons learned in developing such systems can be valuable for designing CALL applications for practicing conversational skills.

2. More and better training data are needed to support basic research on modeling non-native conversational speech.One of the most needed resources for developing open response conversational CALL applications is large corpora of non-native transcribed speech data, of both read and conversational speech. Since accents vary depending on the student's first language, separate databases must either be collected for each L1 subgroup, or a representative sample of speakers of different languages must be included in the database. Creating such databases is extremely labor and cost intensive--a phone level transcription of spontaneous conversational data can cost up to one dollar per phone. A number of multilingual conversational databases of telephone speech are publicly available through the Linguistic Data Consortium (LDC), including Switchboard (US English) and CALLHOME (English, Japanese, Spanish, Chinese, Arabic, German). Our own effort in collaboration with John Hopkins University (Byrne, Knodt, Khudanpur, & Bernstein, 1998; Knodt, Bernstein, & Todic,1998) has been to collect and model spontaneous English conversations between Hispanic natives. All of these efforts will improve our understanding of the disfluent speech of language learners and help model this speech type for the purpose of human-machine communication.

DEFINING AND ACQUIRING LITERACY IN THE AGE OF INFORMATION

Moll defined literacy as "a particular way of using language for a variety of purposes, as a sociocultural practice with intellectual significance" (1994, p. 201). While traditional definitions of literacy have focused on reading and writing, the definition of literacy today is more complex. The process of becoming literate today involves more than learning how to use language effectively; rather, the process amplifies and changes both the cognitive and the linguistic functioning of the individual in society. One who is literate knows how to gather, analyze, and use information resources to solve problems and make decisions, as well as how to learn both independently and cooperatively. Ultimately literate individuals possess a range of skills that enable them to participate fully in all aspects of modern society, from the workforce to the family to the academic community. Indeed, the development of literacy is "a dynamic and ongoing process of perpetual transformation" (Neilsen, 1989, p. 5), whose evolution is influenced by a person's interests, cultures, and experiences. Researchers have viewed literacy as a multifaceted concept for a number of years (Johns, 1997). However, succeeding in a digital, information-oriented society demands multiliteracies, that is, competence in an even more diverse set of functional, academic, critical, and electronic skills.

To be considered multiliterate, students today must acquire a battery of skills that will enable them to take advantage of the diverse modes of communication made possible by new technologies and to participate in global learning communities. Although becoming multiliterate is not an easy task for any student, it is especially difficult for ESL students operating in a second language. In their attempts to become multiliterate, ESL students must acquire linguistic competence in a new language and at the same time develop the cognitive and sociocultural skills necessary to gain access into the social, academic, and workforce environments of the 21st century. They must become functionally literate, able to speak, understand, read, and write English, as well as use English to acquire, articulate and expand their knowledge. They must also become academically literate, able to read and understand interdisciplinary texts, analyze and respond to those texts through various modes of written and oral discourse, and expand their knowledge through sustained and focused research. Further, they must become critically literate, defined here as the ability to evaluate the validity and reliability of informational sources so that they may draw appropriate conclusions from their research efforts. Finally, in our digital age of information, students must become electronically literate, able "to select and use electronic tools for communication, construction, research, and autonomous learning" (Shetzer, 1998).

Helping students develop the range of literacies they need to enter and succeed at various levels of the academic hierarchy and subsequently in the workforce requires a pedagogy that facilitates and hastens linguistic proficiency development, familiarizes students with the requirements and conventions of academic discourse, and supports the use of critical thinking and higher order cognitive processes. A large body of research conducted over the past decade (see, e.g., Benesch, 1988; Brinton, Snow, & Wesche, 1989; Crandall, 1993; Kasper, 1997a, 2000a; Pally, 2000; Snow & Brinton, 1997) has shown that content-based instruction (CBI) is highly effective in helping ESL students develop the literacies they need to be successful in academic and workforce environments.

CONTENT-BASED INSTRUCTION AND LITERACY DEVELOPMENT

CBI develops linguistic competence and functional literacy by exposing ESL learners to interdisciplinary input that consists of both "everyday" communicative and academic language (Cummins, 1981; Mohan, 1990; Spanos, 1989) and that contains a wide range of vocabulary, forms, registers, and pragmatic functions (Snow, Met, & Genesee, 1989; Zuengler & Brinton, 1997). Because content-based pedagogy encourages students to use English to gather, synthesize, evaluate, and articulate interdisciplinary information and knowledge (Pally, 1997), it also allows them to hone academic and critical literacy skills as they practice appropriate patterns of academic discourse (Kasper, 2000b) and become familiar with sociolinguistic conventions relating to audience and purpose (Soter, 1990).

The theoretical foundations supporting a content-based model of ESL instruction derive from cognitive learning theory and second language acquisition (SLA) research. Cognitive learning theory posits that in the process of acquiring literacy skills, students progress through a series of three stages, the cognitive, the associative, and the autonomous (Anderson, 1983a). Progression through these stages is facilitated by scaffolding, which involves providing extensive instructional support during the initial stages of learning and gradually removing this support as students become more proficient at the task (Chamot & O'Malley, 1994). Second language acquisition (SLA) research emphasizes that literacy development can be facilitated by providing multiple opportunities for learners to interact in communicative contexts with authentic, linguistically challenging materials that are relevant to their personal and educational goals (see, e.g., Brinton, et al., 1989; Kasper, 2000a; Krashen, 1982; Snow & Brinton, 1997; Snow, et al., 1989).

In a 1996 paper published in The Harvard Educational Review, The New London Group (NLG) advocated developing multiliteracies through a pedagogy that involves a complex interaction of four factors which they called Situated Practice, Overt Instruction, Critical Framing, and Transformed Practice. According to the NLG, becoming multiliterate requires critical engagement in relevant tasks, interaction with diverse forms of communication made possible by electronic technologies, and participation in collaborative learning contexts. Warschauer (1999) concurred and stated that a pedagogy of critical inquiry and problem solving that provides the context for "authentic and collaborative projects and analyses" (p. 16) that support and are supported by the use of electronic technologies is necessary for ESL students to acquire the linguistic, social, and technological competencies key to literacy in a digital world.

According to a 1995 report published by the United States Department of Education, "technology is an important enabler for classes organized around complex, authentic tasks" and when "used in support of challenging projects, [technology] can contribute to students' sense ... that they are using real tools for real purposes." Technology use increases students' motivation as it promotes their active engagement with language and content through authentic, challenging tasks that are interdisciplinary in nature (McGrath, 1998). Technology use also encourages students to spend more time on task. As they search for information in a hyperlinked environment, ESL students benefit from increased opportunities to process linguistic and content information. Used as a tool for learning, technology supports a level of task authenticity and complexity that fits well with the interdisciplinary work inherent in content-based instruction and that promotes the acquisition of multiliteracies.

THEORY INTO PRACTICE

These research findings suggest that in our efforts to prepare ESL students for the challenges of the academic and workforce environments of the 21st century, we should adopt a pedagogical model that incorporates information technology as an integral component and that specifically targets the development of the range of literacies deemed necessary for success in a digital, information-oriented society. This paper describes a content-based pedagogy, which I call focus discipline research (Kasper, 1998a), and presents the results of a classroom study conducted to measure the effects of focus discipline research on the development of ESL students' literacy skills.

As described here, focus discipline research puts theory into practice as it incorporates the principles of cognitive learning theory, SLA research, and the four components of the NLG's (1996) pedagogy of multiliteracies. Through pedagogical activities that provide the context for situated practice, overt instruction, critical framing, and transformed practice, focus discipline research promotes ESL students' choice of and responsibility for course content, engages them in extended practice with linguistic structures and interdisciplinary material, and encourages them to become "content experts" in a subject of their own choosing.

CONCLUSION

It can be seen that it is difficult and probably undesirable to attempt to determine the difficulty of a listening and viewing task in any absolute terms. By considering the three aspects that affect the level of difficulty, namely text, task, and context features, it is possible to identify those characteristics of tasks that can be manipulated. Having identified the variable characteristics of tasks in developing the model, it is necessary to look to the dynamic interaction among, tasks, texts, and the computer-based environment.

Task design and text selection in this model also incorporate the identification and consideration of context. Teachers can make provision for their influence on learner perception of difficulty by providing texts and tasks that range across these levels, and by ensuring that learners with lower language proficiency can ease themselves gradually into the more contextually difficult tasks. This can be achieved by reducing the level of difficulty of other parameters such as text or task difficulty, or by minimizing other aspects of contextual difficulty. Thus, for example, learners of lower proficiency who are exposed for the first time to a task based on a broadcast announcement would be provided with appropriate visual support in the form of graphics or video to reduce textual difficulty. The task type would also be kept to a low level of cognitive demand (Hoven, 1991, 1997a, 1997b).

In a CELL environment, this identification of parameters of difficulty enables task designers to develop and modify tasks on the basis of clear language pedagogy that is both learner-centred and cognitively sound. Learners are provided with the necessary information on text, task, and context to make informed choices, and are given opportunities to implement their decisions. Teachers are therefore creating a CELL environment that facilitates and encourages exploration of, and experimentation with, the choices available. Within this model, learners are then able to adjust their own learning paths through the texts and tasks, and can do this at their own pace and at their individual points of readiness. In sociocultural terms, the model provides learners with a guiding framework or community of practice within which to develop through their individual Zones of Proximal Development. The model provides them with the tools to mediate meaning in the form of software incorporating information, feedback, and appropriate help systems.

By taking account of learners' needs and making provision for learner choice in this way, one of the major advantages of using computers in language learning--their capacity to allow learners to work at their own pace and in their own time--can be more fully exploited. It then becomes our task as researchers to evaluate, with learners' assistance, the effectiveness of environments such as these in improving the their listening and viewing comprehension as well as their approaches to learning in these environments.

REFERENCES

1. Adair-Hauck, B., & Donato, R. (1994). Foreign language explanations within the zone of proximal development. The Canadian Modern Language Review 50(3), 532-557.

2. Anderson, A., & Lynch, T. (1988). Listening. Oxford: Oxford University Press.

3. Armstrong, D. F., Stokoe, W. C., & Wilcox, S. E. (1995). Gesture and the nature of language. Cambridge: University of Cambridge.

4. Arndt, H., & Janney, R. W. (1987). InterGrammar: Toward an integrative model of verbal, prosodic and kinesic choices in speech. Berlin: Mouton de Gruyter.

5. Asher, J. J. (1981). Comprehension training: The evidence from laboratory and classroom studies. In H. Winitz (Ed.), The Comprehension Approach to Foreign Language Instruction (pp. 187-222). Rowley, MA: Newbury House.

6. Bacon, S. M. (1992a). Authentic listening in Spanish: How learners adjust their strategies to the difficulty of input. Hispania 75, 29-43.

7. Bacon, S. M. (1992b). The relationship between gender, comprehension, processing strategies, cognitive and affective response in foreign language listening. Modern Language Journal 76(2), 160-178.

8. Batley, E. M., & Freudenstein, R. (Eds.). (1991). CALL for the Nineties: Computer Technology in Language Learning. Marburg, Germany: FIPLV/EUROCENTRES.

9. Ellis, R. (1985). Understanding second language acquisition. Oxford: Oxford University Press.

10. Faerch, C., & Kasper, G. (1986). The role of comprehension in second language learning. Applied Linguistics 7(3), 257-274.

11. Felder, R. M., & Henriques, E. R. (1995). Learning and teaching styles in foreign language education. Foreign Language Annals 28, 21-31.

12. Felix, U. (1995). Theater Interaktiv: multimedia integration of language and literature. On-CALL 9, 12-16.

13. Fidelman, C. (1994). In the French Body/In the German Body: Project results. Demonstrated at the CALICO '94 Annual Symposium "Human Factors." Northern Arizona University, Flagstaff, AZ.

14. Fidelman, C. G. (1997). Extending the language curriculum with enabling technologies: Nonverbal communication and interactive video. In K. A. Murphy-Judy (Ed.), NEXUS: The convergence of language teaching and reseearch using technology, pp. 28-41. Durham, NC: CALICO.

15. Fish, H. (1981). Graded activities and authentic materials for listening comprehension. In The teaching of listening comprehension. ELT Documents Special: Papers presented at the Goethe Institut Colloquium Paris 1979, pp. 107-115. London: British Council.

16. Garrigues, M. (1991). Teaching and learning languages with interactive videodisc. In M. D. Bush, A. Slaton, M. Verano, & M. E. Slayden (Eds.), Interactive videodisc: The "Why" and the "How." (CALICO Monograph Series, Vol. 2, Spring, pp. 37-43.) Provo, UT: Brigham Young Press.

17. Gassin, J. (1992). Interkinesics and Interprosodics in Second Language Acquisition. Australian Review of Applied Linguistics 15(1), 95-106.

18. Hoven, D. (1997a). Instructional design for multimedia: Towards a learner-centred CELL (Computer-Enhanced Language Learning) model. In K. A. Murphy-Judy (Ed.), NEXUS: The convergence of language teaching and research using technology, pp. 98-111. Durham, NC: CALICO.

19. Hoven, D. (1997b). Improving the management of flow of control in computer-assisted listening comprehension tasks for second and foreign language learners. Unpublished doctoral dissertation, University of Queensland, Brisbane, Australia. Retrieved July 25, 1999 from the World Wide Web: http://jcs120.jcs.uq.edu.au/~dlh/thesis/.

20. Richards, J. C. (1983). Listening comprehension: Approach, design, procedure. TESOL Quarterly 17(2), 219-240.

Похожие работы

... Intelligences, The American Prospect no.29 (November- December 1996): p. 69-75 68.Hoerr, Thomas R. How our school Applied Multiple Intelligences Theory. Educational Leadership, October, 1992, 67-768. 69.Smagorinsky, Peter. Expressions:Multiple Intelligences in the English Class. - Urbana. IL:National Council of teachers of English,1991. – 240 p. 70.Wahl, Mark. ...

0 комментариев